Stephen Mitchell suffered from allergies. “When the trees come out, I can’t see. People stand around saying, ‘Isn’t it lovely,’ but I weep,” he told the New York Times in 1965. A thirty-five-year-old professor at Syracuse University, he found sanctuary in the temperature-controlled environment of the school’s computer center, where he surprised many people by showing how computers could be used to advance work in the humanities.

Each year, the Modern Language Association compiled a bibliography of every book, article, and review published during the year prior. Assembling the bibliography from more than 1,150 periodicals and making the accompanying index was an enormous undertaking, and it was all done by hand. Mitchell thought he could automate the process, and MLA agreed to let him try. He spent weeks translating the names of editors, translators, and authors into punch cards and writing the program to interpret the data. Then it was all over in twenty-three minutes. That’s how long it took the computer to compile and print the index, which ran to 18,001 entries.

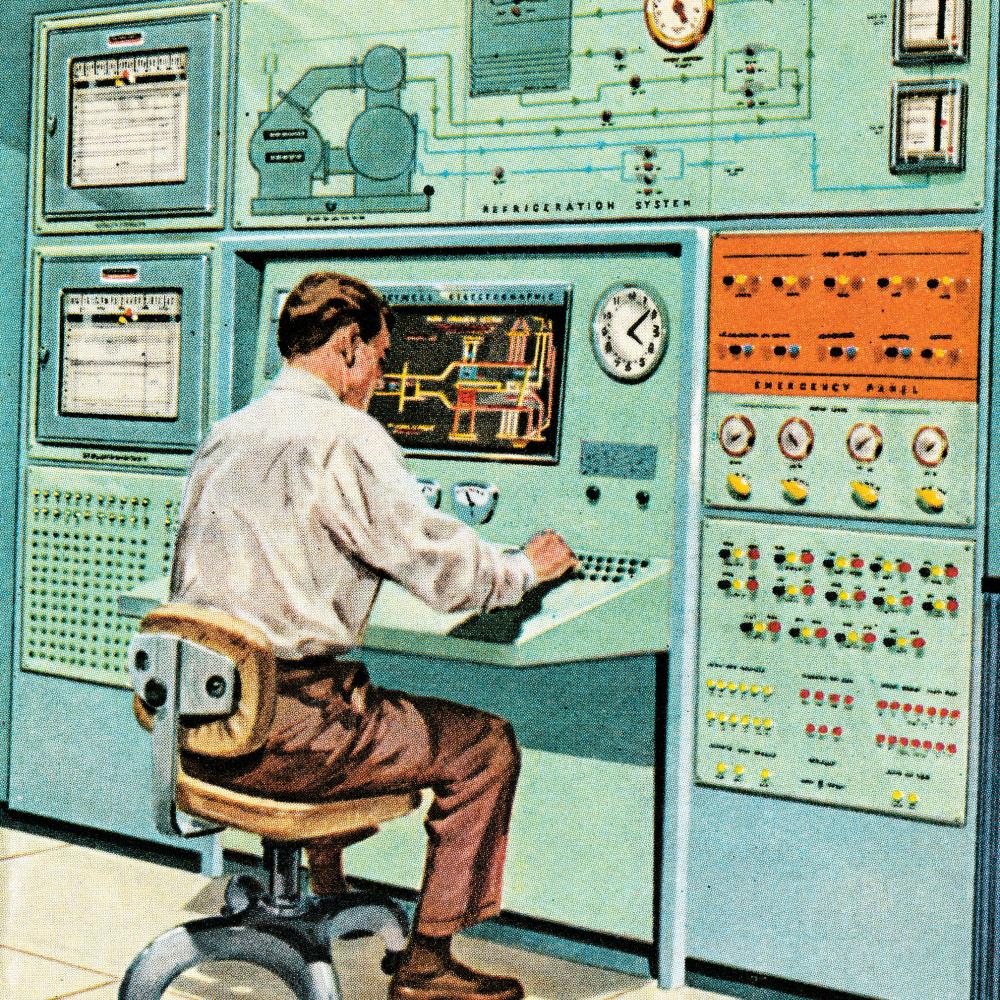

During the previous fifteen years, computers had been making inroads into the humanities, emerging as more than a passing curiosity by the mid 1960s. The digital humanities—or “humanities computing,” as it was then known—used machines the size of small cars, punch cards, and data recorded on magnetic tape. To many scholars, its methods, which depended on breaking down texts into data elements, seemed alien, as did the antiseptic atmosphere of the computer lab. But for those who didn’t mind working away from the comforting smell of musty old books, a new field was opening up, a hybrid discipline that would receive significant assistance from the National Endowment for the Humanities, which made its debut in 1965.

A PRIEST AND A SCIENTIST WALK INTO A COMPUTER LAB . . .

In the years following World War II, the sciences, whether physical, biological, or social, embraced computers to work on complex calculations, but it took the humanities a little longer to see the value of computing. One of the biggest challenges for humanists was the question of how to turn language, the core operating system of the humanities, into numbers that could be compiled and calculated. At this point in the history of computing, all data had to be in numerical form. It’s not surprising, then, that some of the first humanities projects were indexes and concordances, since the location of a word could be given a numerical value.

The first concordance was made for the Vulgate Bible, under the direction of Hugo of St-Cher, a Dominican scholar of theology and member of the faculty at the University of Paris. When completed in 1230, the Concordantiae Sacrorum Bibliorum enabled the Dominicans to locate every mention of “lamb” or “sacrifice” or “adultery.” According to legend, five hundred monks toiled to complete the concordance. Herein lay the challenge of making concordances and indexes: You either had to command a team of multitudes or be willing to devote yourself to the project for years. John Bartlett, he of the Familiar Quotations, spent two decades working with his wife on the first full Shakespeare concordance. The volume, given the unwieldy name of New and Complete Concordance or Verbal Index to Words, Phrases and Passages in the Dramatic Works of Shakespeare, with a supplementing concordance to his poems (1894), ran to 1,910 pages.

The story of digital humanities often begins with another theologian on a quest to make a concordance. In the mid 1940s, Father Roberto Busa, an Italian Jesuit priest, latched onto the idea of making a master index of works by Saint Thomas Aquinas and related authors. Busa had written his dissertation on “the metaphysics of presence” in Aquinas. Looking for the answer, he created 10,000 hand-written index cards. His work demonstrated the importance of how an author uses a particular word, especially prepositions. But making an index for all of Aquinas’s works required wrangling ten million words of Medieval Latin. It seemed an impossible task.

In 1949, Busa’s search for a solution led him to the United States and International Business Machines, better known as IBM, which had a patent on the resources Busa needed to realize his project. Without the company’s help, his vision for a master concordance would remain just a dream.

Before meeting with Thomas J. Watson Sr., IBM’s president and founder, Busa learned that company staff had issued a report saying that what he wanted to do was impossible. As he waited outside Watson’s office, Busa spied a poster for IBM emblazoned with the slogan, “The difficult we do right away; the impossible takes a little longer.” Busa took the poster into the meeting. “Sitting down in front of him and sensing the tremendous power of his mind, I was inspired to say: ‘It is not right to say ‘no’ before you have tried,’” Busa later wrote. “I took out the poster and showed him his own slogan.” Watson agreed to help, provided that IBM didn’t turn into “International Busa Machines.”

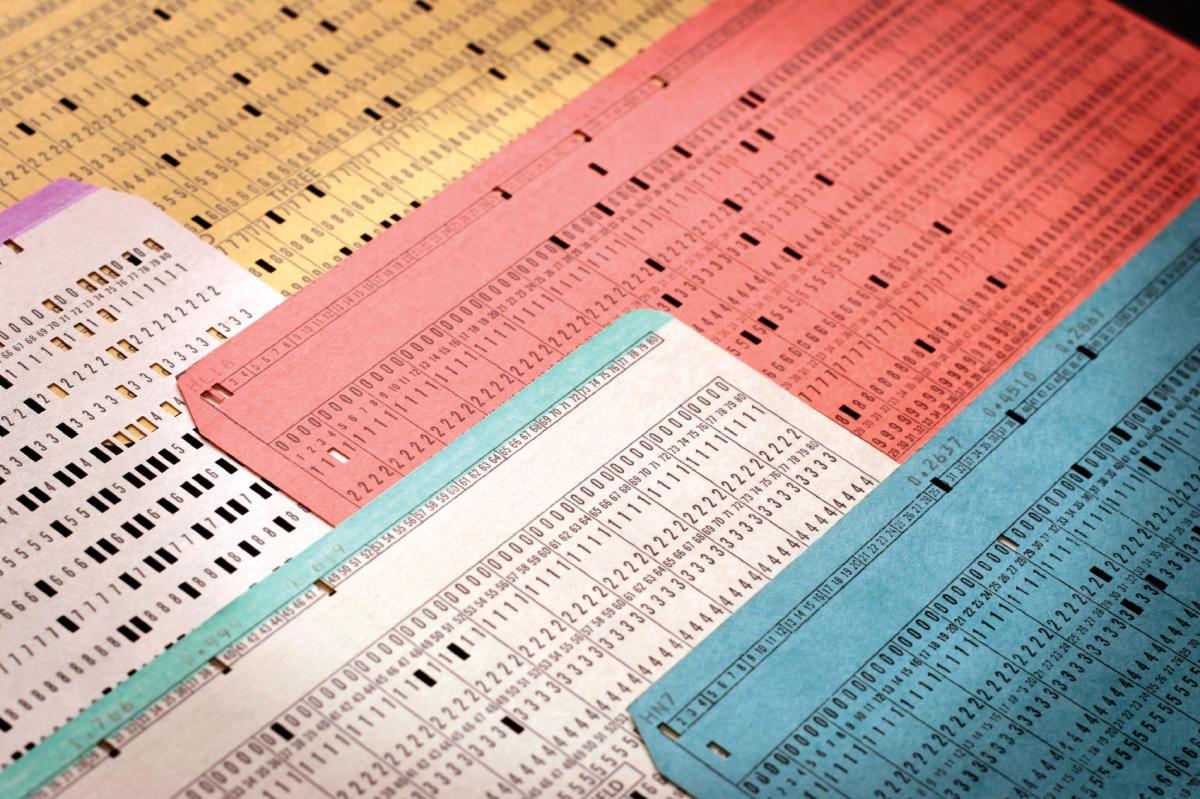

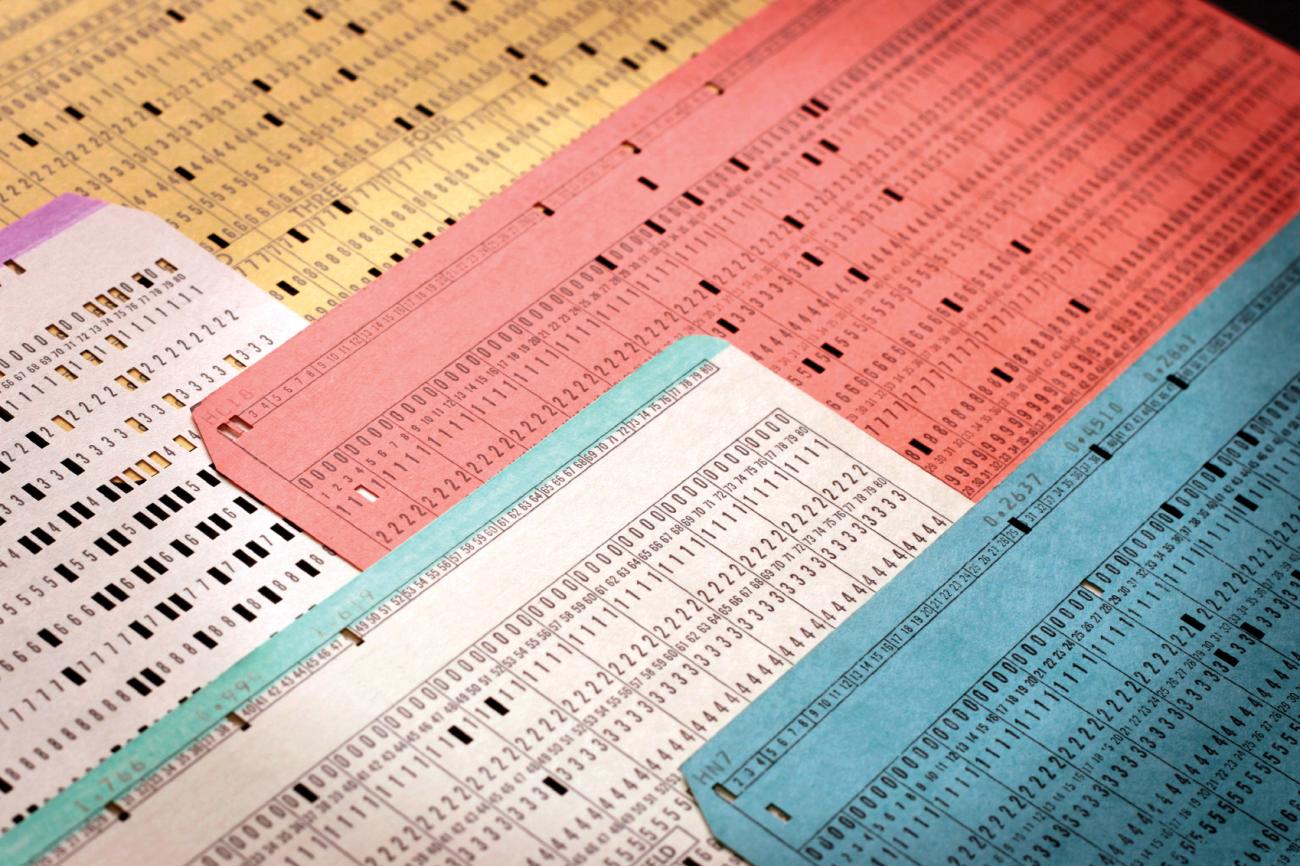

By 1951, Busa had used IBM’s accounting machines and a selection of Aquinas’s writing—his poetry—as a proof of concept. Poetry was ideal because punch cards at that time could fit only eighty characters—just enough for a verse and the word (or lemma) being noted. In the introduction to Varia Specimina, the resulting index, Busa outlined the five-step process required for making a concordance:

1. transcription of text, broken down into phrases, on to separate cards;

2. multiplication of the cards (as many as there are words on each);

3. indicating on each of the resulting cards the respective entry (lemma);

4. selection and alphabetization of all cards purely by spelling; and

5. after the intelligent editing of the alphabetism, the typographical composition of the pages for publishing.

Busa proved that a punch-card machine could accomplish all five of these steps. The only manual input came at the beginning when a keyboard was used to enter in the data. (Everything was entered in twice as a typographical failsafe. If the lines varied, the entry was reviewed.) After that, the machine sorted and categorized, with Busa and his team minding the process.
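For readers who think in code, Busa's five steps and his double-keying check can be sketched in a few lines of Python. This is only a modern illustration, not a reconstruction of what the punch-card machines did: the names `Card`, `build_card_file`, `compose_lines`, and `double_entry_mismatches` are invented here, the lowercase spelling of a word stands in for a true lemma, and the "intelligent editing" of step 5 is left out. The sample verse is the opening of Pange lingua, a hymn attributed to Aquinas, chosen for this sketch rather than taken from Varia Specimina.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    lemma: str     # entry word noted on the card (step 3)
    phrase: str    # phrase transcribed from the text (step 1)
    location: int  # 1-based position of the phrase in the text


def double_entry_mismatches(first_keying, second_keying):
    """Return the indexes of lines whose two keyings differ and need review."""
    return [
        i
        for i, (a, b) in enumerate(zip(first_keying, second_keying))
        if a != b
    ]


def build_card_file(phrases):
    """Steps 1-4: one card per word, tagged with its entry, then alphabetized."""
    cards = []
    for location, phrase in enumerate(phrases, start=1):      # step 1
        for word in phrase.split():                            # step 2
            # Step 3, crudely: the lowercase spelling stands in for the lemma.
            lemma = word.strip(".,;:!?").lower()
            if lemma:
                cards.append(Card(lemma, phrase, location))
    return sorted(cards, key=lambda c: (c.lemma, c.location))  # step 4


def compose_lines(cards):
    """Step 5, minus the editing: one printable line per card."""
    return [f"{c.lemma:<12}{c.location:>3}  {c.phrase}" for c in cards]


if __name__ == "__main__":
    verse = ["Pange, lingua, gloriosi", "Corporis mysterium"]
    for line in compose_lines(build_card_file(verse)):
        print(line)
```

Each word yields its own card carrying the full phrase, which is why the card count multiplies so quickly: a text of ten million words means ten million cards before any sorting begins.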

Not only was the project a success, but its methods could be replicated. As Busa wrote, “In a few days the philologist, with the mere help of a technical expert for the care of the machines, will have in hand the general card file and the final proofs corrected for the printer, certain of an accuracy which could never have been guaranteed by the cooperation of man’s sensorial and psychical nerve centers.” Busa, however, had plenty of work ahead of him. It took until 1967 to punch all of the cards for what would become the fifty-six-volume Index Thomisticus.

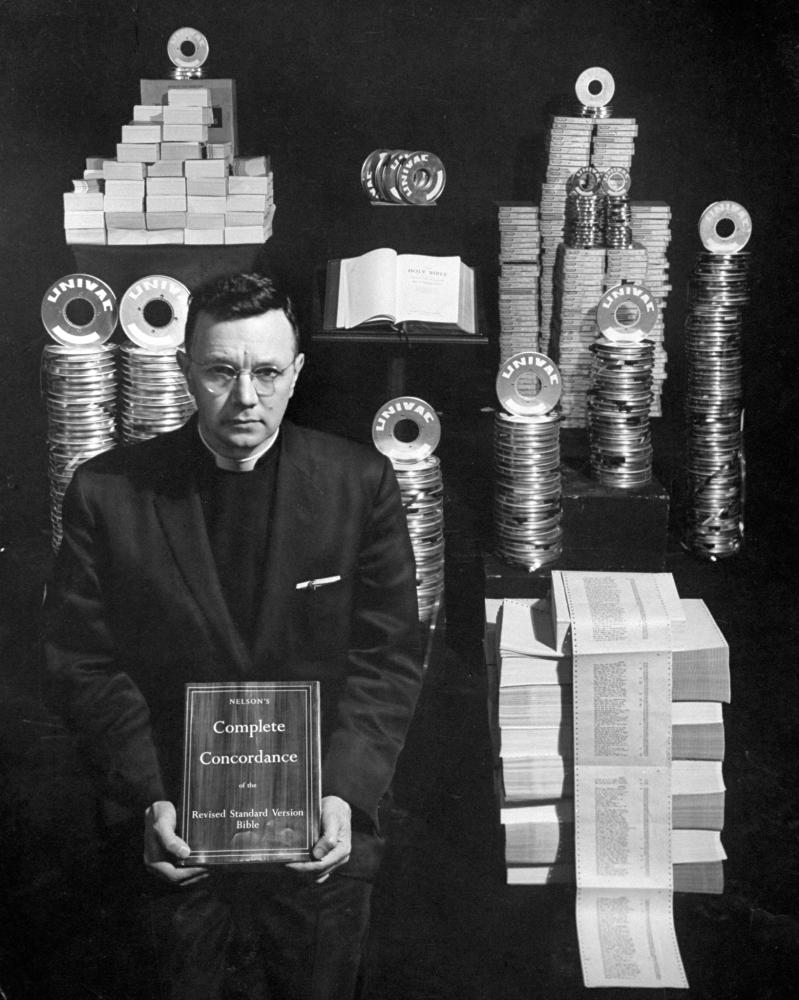

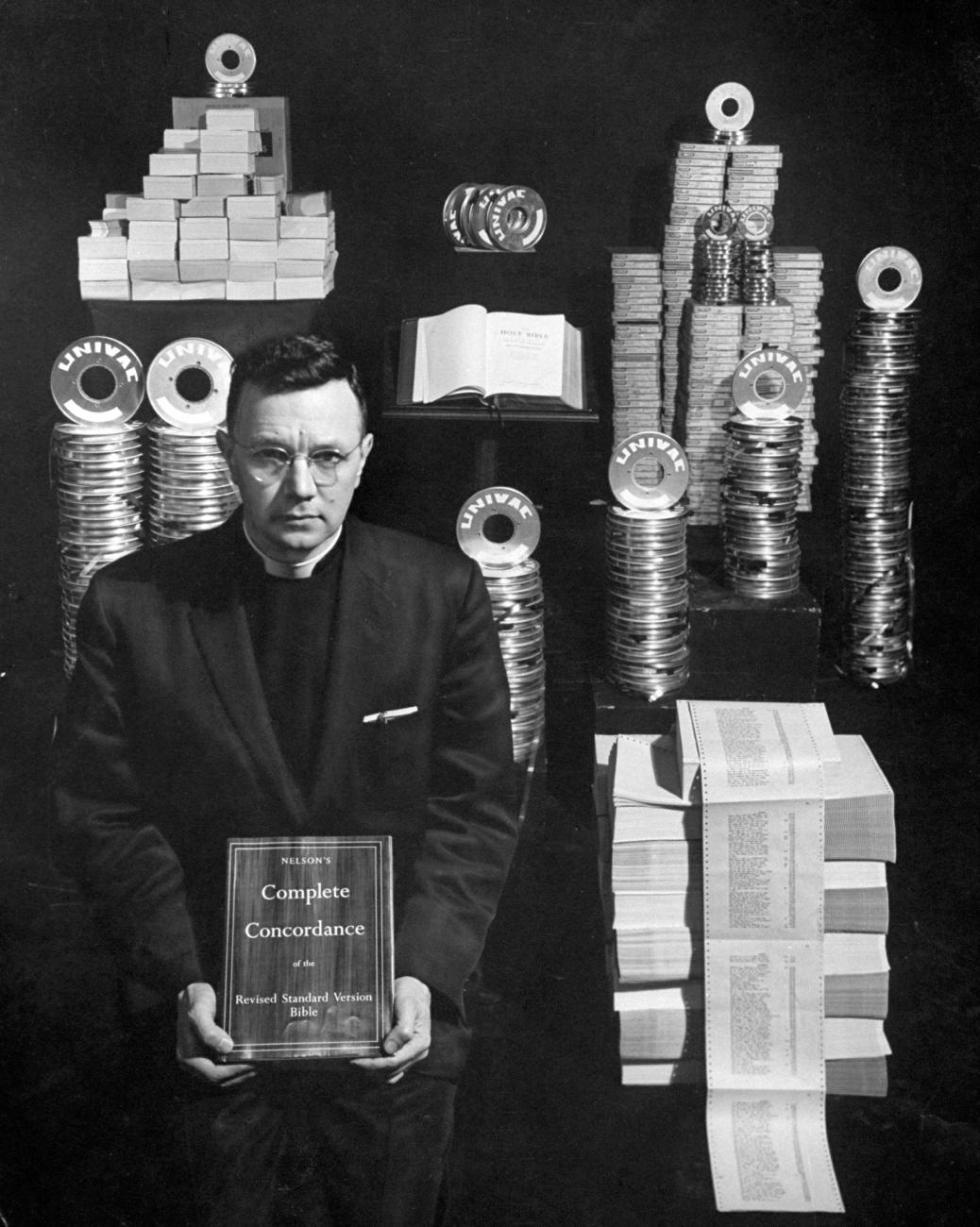

Busa wasn’t the only holy man in search of technological intervention. Reverend John W. Ellison looked to Remington Rand, an IBM competitor, for help with making a concordance for the newly available Revised Standard Version of the Bible. In 1957, Rand’s UNIVAC I computer, using 480 pounds of cards and 80 miles of tape, compiled the concordance in 400 hours. The resulting book—the first computerized concordance of the Bible—featured 300,000 entries and ran 2,157 pages. By comparison, a concordance for the King James Bible, published in 1890, had taken thirty years to complete.

Ellison’s project had also made the crucial jump from accounting machines to computers. It wasn’t long before literary scholars saw the same possibilities, aided by the arrival of computers on campuses. By the mid 1960s, scholars had created indexes and concordances of Early Middle High German texts, along with the poems of Matthew Arnold and W. B. Yeats.

Computers were also being used to decide questions of authorship, since they could count word frequency faster and more accurately than traditional hand methods. Two mathematicians, Harvard’s Frederick Mosteller and the University of Chicago’s David L. Wallace, conducted one of the most famous analyses on The Federalist. Seventy-seven of the eighty-five essays by Alexander Hamilton, James Madison, and John Jay were published anonymously. Scholars had determined that Hamilton wrote forty-three of them, Madison fourteen, and Jay five. Madison and Hamilton also wrote three of the articles jointly. But essays numbered 49 through 58 and 62 and 63 were a bit of a mystery. By comparing the disputed articles with other writings by Madison and Hamilton—both men were prolific writers, so there was no lack of material—Mosteller and Wallace determined the odds favored Madison. The key to solving the mystery was the use of filler words—also, of, by, on, this—along with “markers.” Hamilton favored the word “upon,” using it three times in every thousand words, while Madison used it only once every six thousand words. The calculations were made on an IBM 7090, the same model NASA used to direct the Mercury space flights.
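The "upon" marker lends itself to a small worked example. The Python sketch below computes how often a marker word appears per thousand words and then asks which author's habitual rate is closer. It is a deliberately naive stand-in, not Mosteller and Wallace's procedure, which weighed many words together to arrive at odds. The function names are invented for illustration, and the two reference rates are simply the figures quoted above (three per thousand words for Hamilton, one per six thousand for Madison).

```python
import re


def rate_per_thousand(text, marker):
    """Occurrences of `marker` per 1,000 words of `text` (case-insensitive)."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    return 1000 * words.count(marker) / len(words)


def closer_author(disputed_rate, known_rates):
    """Name the author whose habitual rate is nearest the disputed rate."""
    return min(known_rates, key=lambda name: abs(known_rates[name] - disputed_rate))


# Rates of "upon" per 1,000 words, taken from the figures in the text.
UPON_RATES = {"Hamilton": 3.0, "Madison": 1000 / 6000}

if __name__ == "__main__":
    # A made-up 1,000-word sample in which "upon" never appears.
    sample = "the " * 1000
    rate = rate_per_thousand(sample, "upon")
    print(rate, closer_author(rate, UPON_RATES))
```

A single marker is weak evidence on its own, which is why the real study combined many filler words before stating its odds.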

In September 1964, one hundred fifty literary scholars and computer scientists converged on IBM’s research center in Yorktown Heights, New York, for one of the first conferences devoted to computers and literature. Sally Sedelow, a professor at St. Louis University, was working on the problem of whether style parameters could be defined precisely enough to be programmed, using act 4, scene 1 of Hamlet as a test case. The answer was “yes.” A computer comparison of the works of Percy Bysshe Shelley and John Milton revealed that Shelley was more influenced by Milton than scholars had surmised. James T. McDonough Jr., at St. Joseph’s College in Philadelphia, envisioned a center for advanced electronic literary and linguistic study. Sounding a practical note, Martin Ray, a linguist for Rand Corporation, argued for programming standards to ensure that work done by one group would be available to others.

The following year, IBM sponsored two more conferences, one at Yale University and another at Purdue University. At the Yale conference, the skeptics made themselves known, forcing the digital humanists to argue that “putting Shelley’s poetry into a computer was not comparable to stuffing neckties into a kitchen blender.” They also worked to reassure the unconverted that mainframes wouldn’t replace scholars any time soon. Instead, computers would free scholars from drudgery, allowing the mind more time to dwell on creative and intellectual pursuits.

Stephen Parrish, a professor at Cornell and the man behind the Yeats and Arnold concordances, urged scholars to embrace the possibilities offered by “gods in black boxes”: “We have, as humanists, nothing to fear and everything to gain from coming to terms with the revolution which is the greatest single event of our time.” One of the bigger skeptics was Jacques Barzun, provost of Columbia University, who warned that the fascination of the computer could lead to “the fallacy of assessing importance by weight in numbers.” Counting symbols or other items in a text, Barzun wrote, “proves absolutely nothing but the industry of the indexer.”

In 1966, the first issue of Computers and the Humanities was published by Queens College, with support from IBM and the United States Steel Foundation. In return for a subscription, the editors promised to inform readers of conferences, introduce them to new methodologies, and connect them to people working on similar problems. “Hopefully, we can help to reduce the wasteful duplication of key-punching and programming that exists even in as small a field as computer research in the humanities,” they wrote. One of the most valuable services offered by the journal was a listing of scholars working in the field and a rundown on available methodologies. A scan of the first list shows scholars using the IBM 7040, 7090, and 7094, along with magnetic tape and the ubiquitous punch card, and programming in FORTRAN and COBOL.

The journal’s editorial board was keenly aware of how “technological indulgence” might damage the discipline. Joseph Raben, a professor at Queens College and the journal’s editor, noticed that some of his colleagues had become a little punch-card drunk. “Bemused by this thrilling new speed and magnitude at their command, some humanists have proceeded to count anything and everything in the hope that totals will tell them something meaningful about literature,” he told the New York Times. Louis T. Milic, a member of Columbia’s English department, worried about the potential damage done by “the illicit misshaping of literary problems into quantitative problems.” He fretted that “the atmosphere of the computer room may prove intoxicating to us,” cautioning that “the humanities should not stagger into the machine age.”

Milic’s reservations were unexpected. With A Quantitative Approach to the Style of Jonathan Swift (1966), he had become a pioneer of computer-based literary analysis. Frustrated by contradictory views on Swift’s style, he used a computer to identify grammatical patterns and tics over Swift’s corpus that might not be otherwise apparent. Milic mapped Swift’s proclivity for piling up adjectives and nouns into series of varying lengths and charted his fondness for starting sentences with “and,” “but,” and “or.” Milic also compared Swift’s style with that of other prose masters, including Joseph Addison, Samuel Johnson, Edward Gibbon, and Thomas Macaulay. Although Swift predated everyone except Addison, Milic discovered that Swift’s freewheeling approach to verb usage made him feel far more modern than the rest.

MAN ON THE MOON

In 1957, the American educational system became the focus of intense nationwide debate after the Soviets successfully launched Sputnik, the first manmade satellite to enter space. The Eisenhower administration responded with heavy investments in math and science. In April 1961, the Soviet Union again stunned the world by sending cosmonaut Yuri Gagarin into space to become the first man to orbit Earth. Weeks later, President Kennedy announced the ambitious goal of sending a man to the moon, kicking off not only a new program for NASA, but also a renewed focus on math and science.

The emphasis on what we now call STEM (science, technology, engineering, and mathematics) began to worry those in the humanities. Even before Kennedy issued his challenge, the humanities were absent from the federal government’s research budget. In fiscal year 1961, the government spent $969 million on basic research: 71 percent went to the physical sciences; 26 percent to life sciences; 2 percent to psychological sciences; and 1 percent to social science. Zero percent went to humanities research.

In 1963, three organizations joined together to establish the National Commission on the Humanities: the American Council of Learned Societies (ACLS), the Council of Graduate Schools in the United States, and the United Chapters of Phi Beta Kappa. Barnaby Keeney, president of Brown University, served as its chairman, overseeing a mix of college presidents (Yale, University of California, Gustavus Adolphus, Notre Dame), professors, deans, and administrators. Also on the commission were Thomas Watson Jr., the chairman of IBM and son of the man who had agreed to help Father Busa fourteen years earlier, and Glenn T. Seaborg, chairman of the United States Atomic Energy Commission. Watson was an inspired choice, signaling the importance of the humanities beyond college campuses, while Seaborg proved to be a natural at explaining why the sciences needed the humanities.

The commission spent the next year studying “the state of the humanities in America.” The resulting report, issued at the end of April 1964, argued that the intense focus on science endangered the study of the humanities in both lower and higher education. “The laudable practice of the federal government of making large sums of money available for scientific research has brought great benefits, but it has also brought out an imbalance within academic institutions by the very fact of abundance in one field of study and dearth in the other.” The commission believed that investment in the humanities was in the “national interest” and urged the creation of a new independent federal agency dedicated to supporting humanities research. They wanted the humanities version of the National Science Foundation, which had been created in 1950.

In August 1964, Congressman William Moorhead of Pennsylvania proposed legislation to implement the commission’s recommendations, but the bill stalled. A month later, in a speech at Brown University, President Lyndon Johnson endorsed the idea. “The values of our free and compassionate society are as vital to our national success as the skills of our technical and scientific age. And I look with the greatest of favor upon the proposal . . . for a national foundation for the humanities.” He backed the idea again during his 1965 State of the Union address. By March 1965, the White House had taken the lead on the issue, proposing the establishment of the National Foundation on the Arts and the Humanities. Arts advocates had been waging a similar campaign, and the White House decided to merge the two efforts. The bill called for the creation of two independent agencies—one devoted to arts and one devoted to humanities—both to be advised by governing bodies composed of leaders in their fields.

Senator Claiborne Pell of Rhode Island and Representative Frank Thompson Jr. of New Jersey introduced the bill to their respective chambers, immediately attracting cosponsors. Pell told reporters that their bill represented “the first time in our history” that “a president of the United States has given his administration support to such a comprehensive measure which combines the two areas most significant to our nation’s cultural advancement and to the full growth of a truly great society.” In mid September, Congress sent the bill to the White House for the president’s signature.

On September 29, 1965, two hundred people packed the White House Rose Garden to watch Johnson as he signed the National Foundation on the Arts and the Humanities Act into law. The guest list included actor Gregory Peck, historian Dumas Malone, photographer Ansel Adams, writer Ralph Ellison, architect Walter Gropius, and philanthropist Paul Mellon.

The task of creating the National Endowment for the Humanities from scratch fell first to Henry Allen Moe, the former president of the Guggenheim Foundation. As NEH’s interim chairman, Moe oversaw the first meeting of the National Council on the Humanities, a twenty-six-member advisory group appointed by the president, in March 1966. NEH’s growing staff settled into offices at 1800 G Street, NW, in Washington, D.C., and began the work of designing programs and evaluating applications. Barnaby Keeney, having said his goodbyes to Brown, was sworn in as the first NEH chairman on July 14, 1966, inheriting a staff of fourteen. Nine days later, NEH made its first grant to the American Society of Papyrologists for a summer institute at Yale University.

IF ( (DIGITAL + HUMANITIES) .GE. HUMANITIES) FUND = 1

When NEH announced its first call for grants, listed among the types of projects it wanted to support were “grants for development of humanistically oriented computer research.” The focus on computers may have come as a shock to anyone who hadn’t read the commission’s report. “The committee feels that the humanities lag behind the sciences in the use of new techniques for research, and that this lag should be overcome. All encouragement should be given to the application of modern techniques to scholarship in the humanities: the use of electronic data-processing systems in libraries; the teletype facsimile transmission of inaccessible items; the computer storage, retrieval, and analysis of bibliographies.” The commission urged that research and development funds be allotted for devising ways to help scholars “manage the flood of information on which humanistic scholarship depends.”

As part of its work, the commission had solicited reports from learned societies on the status of and challenges facing their disciplines. Twenty-four statements, many of which highlight how computers were being used, appear in the final report. Anthropologists were using computers for “the quantification of large masses of data” and predicted that their use would only increase. The American Studies Association believed that computers would help with bibliographies, while new facsimile methods would make “rare and locally unavailable items” available for research and teaching. The American Dialect Society saw computers as offering new solutions for sorting and storing materials. The Modern Language Association noted that while some of its members had shown how computers could transform the making of concordances and bibliographies, those techniques remained foreign to most members. It recommended large-scale support for computer training. Classicists were excited about the new possibilities that computers might introduce for their discipline beyond the creation of indexes. “Obviously, it cannot be foreseen what novelties the future will produce,” wrote the American Philological Association, “but it can be confidently assumed that Americans will continue to provide refutation of the venerable canard that ‘after two thousand years there is nothing more to be done in Classics.’”

The digital humanities community responded to NEH’s call for grants, and the Endowment funded at least five digital humanities projects during its first full year of operation. (It’s not always clear from the titles if a project uses computers, so the tally could be higher.) In 1967, NEH made 412 grants. Of those, 285 were fellowships or summer stipends for individuals. The remaining 127 were divided between “research and publication programs” and “educational and special projects.” Digital humanities projects fell into this second group, accounting for at least five of the 127 grants, or about 4 percent.

Franklin B. Zimmerman, a musicologist at Dartmouth, proposed using computers to aid his research on Baroque composers Henry Purcell, George Frideric Handel, and Claudio Monteverdi. Douglass Adair, an intellectual historian and former editor of William and Mary Quarterly, wanted to use statistical methods to resolve authorship questions surrounding Edmund Burke’s work. Clarence C. Mondale, professor at George Washington University, sought to develop a “computer-stored” bibliography of works in American Studies. Parrish received a grant to apply his pioneering techniques for concordances to the works of Ben Jonson, Andrew Marvell, Alexander Pope, and Jonathan Swift.

Also in that first batch of grants was funding for a conference “to explore the potential application of computer science to the advancement of research in the humanities.” The conference, known as the EDUCOM Symposium, was held in June 1967. In addition to the usual presentations about projects in progress and completed, there was talk about where the digital humanities would go next. With the help of a computer, scholars could now accomplish in a month what had previously taken decades. What was possible if scholars asked computers to move beyond counting and sorting?

Norman Holland, a professor at SUNY–Buffalo, attempted to capture the conversations in an article for Computers and the Humanities. One of the ideas that participants discussed was a proto-Wikipedia-JSTOR hybrid: “A related possibility we discussed was the creation of an encyclopedia by the storage of immense amounts of data at various points around the country with consoles at a much greater number of points, consoles which would deliver anything from a once-over-lightly summary of a given subject to a full bibliography and print-outs of scholarly articles on one aspect.” They also talked about creating a network to connect scholars in different parts of the country and world for online collaborations. “Just five years ago no humanistic scholar would have dreamed such things. They all have a kind of gee-gosh, science-fiction quality,” wrote Holland. That digital humanists were even thinking about on-demand encyclopedias or online collaborations is mind-blowing when you stop to consider that the first message sent on ARPANET, the precursor to the Internet, was still two years away. Science fiction, indeed.

WE’RE ALL DIGITAL HUMANISTS NOW

The story of the confluence of NEH and the early years of the digital humanities might have remained locked in the past if not for a digitization program under way at the Endowment. Since 1980, NEH has used computers to manage all of its grant data. We started with a flat-file database running on a Wang minicomputer, progressing to our current Microsoft SQL database server. The grant database is searchable on our website and the XML files can be downloaded from Data.gov as part of the White House’s Open Data Initiative.

What about the records before 1980? They are on paper. The first grant for the papyrology conference was logged on a blue index card with an 8½-by-11-inch data sheet stapled to it. Realizing that system wouldn’t work for long, NEH opted to use McBee KeySort cards. The square cards were printed in such a way that punch holes (1/8” wide) could be made in select spots, allowing the user to pull out relevant cards from a stack by use of a long “needle.” Think of it as a keyword sort on paper. Knowing that NEH has a fantastic history lurking in the records before 1980, the Office of Digital Humanities, Office of Information Resource Management, and the Office of Planning and Budget have joined forces to digitize the old records, inputting the information into a database. It’s a time-consuming process—not unlike preparing punch cards for concordances or indexes.
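The "keyword sort on paper" can be modeled in a few lines of Python. The sketch assumes the usual edge-notched arrangement, in which a card notched at a given position falls free when the needle is run through that position while the unnotched cards stay on the needle. The function name, the card labels, and the meaning assigned to position 3 are all hypothetical and say nothing about how NEH actually coded its cards.

```python
def needle_sort(stack, position):
    """Run the needle through one edge position of a stack of cards.

    Returns (dropped, kept): cards notched at `position` fall free,
    and the rest stay on the needle. Stack order is preserved.
    """
    dropped = [card for card in stack if position in card["notches"]]
    kept = [card for card in stack if position not in card["notches"]]
    return dropped, kept


if __name__ == "__main__":
    # Hypothetical coding: position 3 marks a project that uses a computer.
    stack = [
        {"label": "card A", "notches": {1, 3}},
        {"label": "card B", "notches": {2}},
        {"label": "card C", "notches": {3}},
    ]
    dropped, kept = needle_sort(stack, 3)
    print([card["label"] for card in dropped])
    print([card["label"] for card in kept])
```

Running the needle through a second position on the dropped cards narrows the selection further, the paper equivalent of adding another keyword to a search.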

When we started looking through the grants, we were surprised to see so many examples of the digital humanities in the agency’s infancy. Okay, we were shocked. It was a piece of our history that had been lost. As the digitization project progresses, we hope to uncover even more digital humanities projects, along with support for other fields that may have been obscured over time.