ODH Project Director Q&A: Mary Flanagan

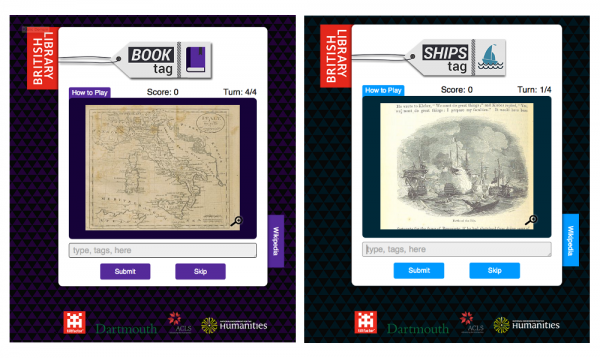

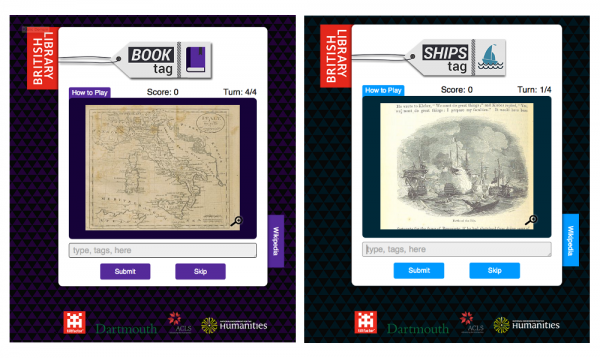

Metadata Games volunteers can contribute information about British Library collections.

Later this week, over 60 leaders in crowdsourcing across the humanities, sciences, and cultural heritage domains will flock to the University of Maryland-College Park for a three-day workshop to share lessons learned and establish priorities for moving forward with this important work. In anticipation of this event, we've published below a recent interview with Mary Flanagan, the workshop's lead organizer, on her NEH-funded Metadata Games project. Flanagan is Sherman Fairchild Distinguished Professor in Digital Humanities and director of Tiltfactor Lab at Dartmouth College.

Metadata Games, which has received both Start-Up and Implementation Grants from the Office of Digital Humanities, is an open-source crowdsourcing game platform used to collect high-quality data on the image, video, and sound collections of cultural institutions. Developed by Tiltfactor, Dartmouth College’s game laboratory, Metadata Games works to make tagging museum and archival collections fun and interactive through a series of different games. Metadata Games has been used by 10 institutions, such as the British Library, the American Antiquarian Society, and the Digital Public Library of America, to tag over 44 different collections.

ODH: How did the idea for Metadata Games originate?

Mary Flanagan: Metadata Games originated from ideas about connected data and inspiration from colleagues. Years ago I heard Luis von Ahn speak, and he showed his crowdsourcing game ESP, which was a version of Taboo for computers that was used to gather information. At the time I was teaching a game design class, and one of my students was making a game about the spring whaling celebration from her Native American community in Alaska. I had been working with our astute college archivist Peter Carini on some teaching materials and was quite confounded that visual materials we had at our Rauner Archive were not coded similarly to, say, images at the Alaskan Archives. Our image had one set of metadata, and Alaska’s image was assigned quite another set. How could these images “talk” to each other? Could they be connected if they each possessed more metadata? Could communities in the know add to what is known about these images? And how could such a process be any fun? So Metadata Games was born.

The system is divided into games that act as plug-ins to a backend database that can gather all the player-input tags. It allows editing, sorting, import and export. We also save player login info and scores!

We have ongoing tagging through gameplay that’s always available, and also hold tagging events.
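The plug-in arrangement Flanagan describes can be pictured with a small sketch. This is only an illustration under stated assumptions, not the Metadata Games code: the names `TagStore` and `ZenTagLike`, the normalization rule, and the one-point-per-tag scoring are all hypothetical. It shows one game plug-in writing player tags into a shared store that also tracks scores and can export rows.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class TagStore:
    """Hypothetical stand-in for the backend database of player-input tags."""
    # media_id -> tag -> number of times the tag was submitted
    counts: dict = field(
        default_factory=lambda: defaultdict(lambda: defaultdict(int))
    )
    # player -> score (assumption: one point per accepted tag)
    scores: dict = field(default_factory=lambda: defaultdict(int))

    def add(self, player, media_id, tag):
        """Normalize and record a tag; return True if it was accepted."""
        normalized = " ".join(tag.lower().split())
        if not normalized:
            return False  # ignore empty submissions
        self.counts[media_id][normalized] += 1
        self.scores[player] += 1
        return True

    def export(self):
        """Return (media_id, tag, count) rows, sorted for stable output."""
        return sorted(
            (media_id, tag, n)
            for media_id, tags in self.counts.items()
            for tag, n in tags.items()
        )


class ZenTagLike:
    """Hypothetical one-player plug-in: every typed tag goes to the store."""

    def __init__(self, store):
        self.store = store

    def play(self, player, media_id, typed_tags):
        """Submit all typed tags and return how many were accepted."""
        return sum(self.store.add(player, media_id, t) for t in typed_tags)


store = TagStore()
game = ZenTagLike(store)
game.play("alice", "img-001", ["Ship", "  sailing  ship ", ""])
game.play("bob", "img-001", ["ship"])
print(store.export())
```

Because each game only talks to the store through `add`, a second game with different rules could be plugged in without changing how tags are saved or exported.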

ODH: How is Metadata Games different from or more effective than other crowdsourced tagging projects?

Mary Flanagan: Metadata Games is great because it provides accurate data for free to national and even international libraries and archives. Players produce masses of data.

[The platform] is used with over 44 collections represented at 10 institutions. It includes tens of thousands of media items (images, audio, and video) that have generated 150,000 tags, with even outlier images garnering nearly 100 tags each.

We have games that collect data, and also games that focus on tag verification in order to “clean up” the data and verify its accuracy, further enhancing the quality of the system. Right now we have the most connectivity and flexibility of any crowdsourcing system for importing and exporting data.
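One common way to "clean up" crowdsourced tags is to keep only those that several independent players agree on. The sketch below illustrates that general idea; it is not a description of how the Metadata Games verification games work. The function name `verified_tags`, the sample submissions, and the threshold of two players are assumptions chosen for illustration.

```python
from collections import defaultdict


def verified_tags(submissions, min_players=2):
    """Return (media_id, tag) pairs submitted by at least `min_players`
    distinct players. `submissions` is an iterable of
    (player, media_id, tag) tuples. The threshold is an assumption."""
    players = defaultdict(set)
    for player, media_id, tag in submissions:
        # Normalize case and whitespace so "Ship" and "ship " match.
        players[(media_id, tag.strip().lower())].add(player)
    return sorted(key for key, who in players.items() if len(who) >= min_players)


# Hypothetical sample data.
submissions = [
    ("alice", "img-001", "ship"),
    ("bob", "img-001", "Ship"),
    ("alice", "img-001", "ship"),      # repeat by the same player: no extra weight
    ("carol", "img-001", "boat"),      # only one player: not verified
    ("carol", "img-002", "portrait"),
    ("bob", "img-002", "portrait "),
]
print(verified_tags(submissions))
```

Counting distinct players rather than raw submissions keeps one enthusiastic player from verifying their own tag by typing it repeatedly.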

ODH: What does your collaboration with the British Library look like and what are some of the other organizations that are using Metadata Games to tag their collections?

Mary Flanagan: We worked with the British Library (BL) to create three variations of a simple Metadata Game so that they could collect data on their massive store of public domain images. Each game has BL's institutional branding and focuses on a particular subset of the one million public domain images released last year (images of book covers, ships, and portraits). For the BL games we also include a Wikipedia search tab that players can use to search for more relevant tags and thus earn higher scores.

Other organizations using Metadata Games to tag their collections simply use the base set of games developed in the course of the project. A few of our user groups include the Sterling and Francine Clark Art Institute Library, the American Antiquarian Society, and the University of Edinburgh’s Library and University Collections. We're also in the process of including collections from the United States Holocaust Memorial Museum.

ODH: Tell me about some of the different games that are part of Metadata Games. How are the different games suited to tagging different types of collections?

Mary Flanagan: When groups or institutions set up Metadata Games, they can choose what kinds of games to use from our suite of games. Each is quite different so that it appeals to a range of player interests and playing styles. For example, Zen Tag is a casual one-player game that is primarily focused on lots of tagging. It is more portal than game, but institutions find that it generates a great many tags. One Up is completely different: it is a competitive two-player game for mobile phones. Stupid Robot is a browser-based competitive one-player game that is used for tag verification. NexTag is designed to tag audio and video artifacts, albeit not through time at this point. That’s on the horizon, as are transcription games.

ODH: What are the challenges to crowdsourcing tagging? What are the benefits?

Mary Flanagan: Some of the challenges include incentivizing users to submit accurate tags, making their contributions feel valuable (they are!), and verifying user-generated tags. The benefits include tapping into the "wisdom of the crowd" to describe collections quickly, providing nuanced descriptions and improved search results, and increasing user engagement with cultural heritage collections. Crowdsourcing tags also provides researchers with novel data sets.

ODH: Metadata Games was also a recipient of a Start-Up Grant. How has the project been expanded or developed with the Implementation Grant?

Mary Flanagan: A Start-Up Grant is great for testing out and proving your ideas; the Implementation Grant then allowed us to stabilize and expand the base platform for anyone to use. We created additional game plug-ins that can be played on mobile devices (Pyramid Tag, One Up), and added the ability to tag audio and video collections (NexTag). We also created a game focused on tag verification in order to “clean up” the data and verify its accuracy, further enhancing the quality of the system (Stupid Robot).

If we want to get a little technical: during the Implementation Grant, we split the Metadata Games system into two web applications, a Content App and a Game App. The platform is designed so that multiple Content Apps connect to a central Game App. This gives institutions much more flexibility in how Metadata Games is implemented. Players can play with a wide variety of media, while content-supplying institutions retain full control over which collections are accessible to the platform. The system includes a prototype Natural Language Processing (NLP) framework for filtering tag submissions during gameplay and afterwards. A frequently requested feature was the ability to export the tags in a schema such as MODS, so we implemented this along with more robust import and export features.
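To give a sense of what exporting tags "in a schema such as MODS" could look like, here is a minimal sketch. It assumes each tag is written as a MODS `subject`/`topic` element, which is one plausible mapping and not necessarily the one Metadata Games uses. The function name `tags_to_mods` and the sample title are hypothetical; the namespace is the standard MODS version 3 namespace.

```python
import xml.etree.ElementTree as ET

# Standard MODS v3 namespace.
MODS_NS = "http://www.loc.gov/mods/v3"


def tags_to_mods(title, tags):
    """Build a small MODS record as a string.

    Assumption: each distinct tag becomes one <subject><topic> element.
    """
    ET.register_namespace("mods", MODS_NS)
    mods = ET.Element(f"{{{MODS_NS}}}mods")
    title_info = ET.SubElement(mods, f"{{{MODS_NS}}}titleInfo")
    ET.SubElement(title_info, f"{{{MODS_NS}}}title").text = title
    # De-duplicate and sort so the export is stable between runs.
    for tag in sorted(set(tags)):
        subject = ET.SubElement(mods, f"{{{MODS_NS}}}subject")
        ET.SubElement(subject, f"{{{MODS_NS}}}topic").text = tag
    return ET.tostring(mods, encoding="unicode")


# Hypothetical item title and player tags.
record = tags_to_mods("Untitled ship engraving", ["ship", "sail", "ship"])
print(record)
```

A real export would likely carry more of the item's existing catalog record alongside the crowdsourced tags; this sketch only shows where the tags themselves might sit.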

Note: Minor edits for length and clarity have been made to the transcript of this interview.